Naive Principal Component Analysis

Principal Component Analysis is a type of unsupervised learning. Behind the PCA there is a geometric interpretation of the data; specifically, a principal component is a direction in which the data has the largest variation.

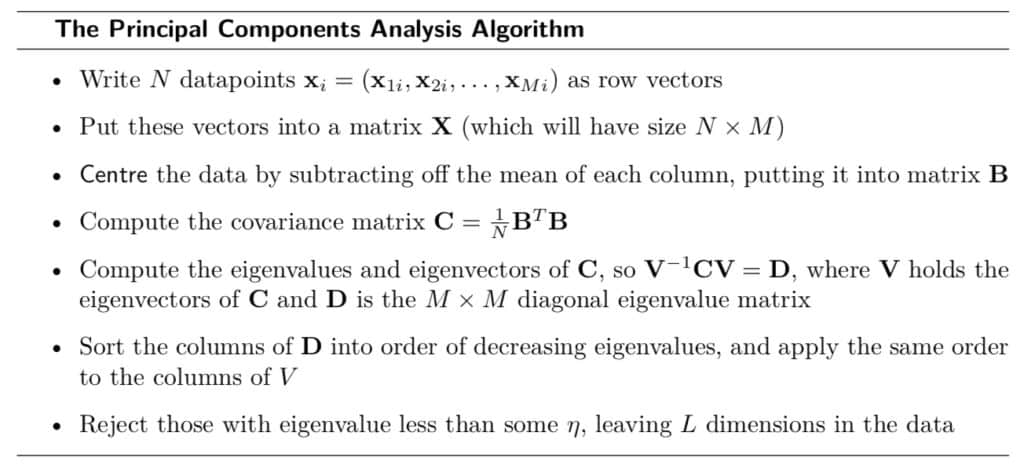

The Principal Component Analysis’ algorithm first centres the data by demeaning, then it places an axis along the direction of largest variation, and repeats for all the orthogonal axes to the first one. As a result, most of the variation is along the axes and the covariance matrix is diagonal (i.e., each component represents a new and uncorrelated variable). In general, the very first components are the most relevant.

The eigen-values determine how much “stretch” is required along their corresponding eigenvector (axis or component). The larger the eigen-values, the larger the variation along that component and, as a a consequence, the higher its relevancy.

According to the algorithm above, a PCA is employed to conduct a model-free factor analysis to infer the structure of portfolio returns instead of relying on established factor models.

The Bats Global Markets includes equities, options, and foreign exchange challenging the dominance of the New York Stock Exchange (NYSE) and Nasdaq in terms of market capitalization. Since 2016, Bats has become the largest ETFs exchange. Although a naive approach, the SPY index is compared against a PCA of the stocks included in the S&P500 (i.e., usually a representative index within the exchange is preferred).

In the example, the two components represent 80% of variation:

Finally, I attempt to interpret the two components as factors through the elements of the two components as represented below:

First Component: long small caps in developed countries and value in growth countries, short momentum and alternatives in growth countries

Second Component: long multi factor in growth countries and small caps in developed countries, short dividend, momentum, alternatives, and fixed income in emerging markets

References

Christopher M. Bishop

Bishop, C. M. (2006). Pattern Recognition and Machine Learning

Marsland, S. (2015). Machine Learning