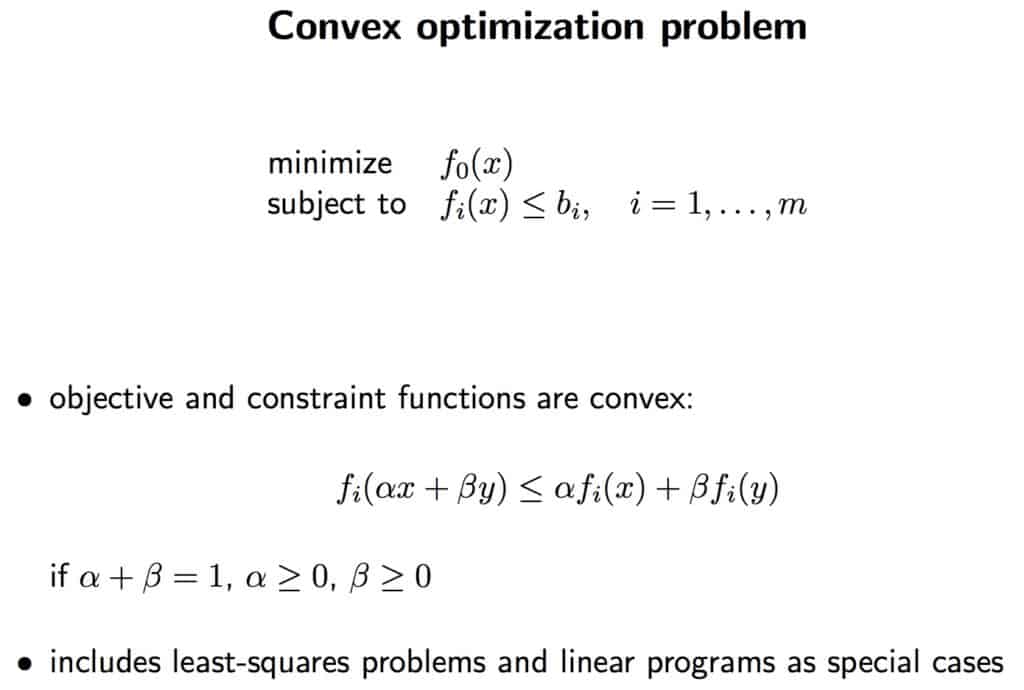

Convex Problems

Many problems in engineering analysis and design can be cast as convex optimization problems, often non-linear and non-differentiable. Specifically, convex optimization problems can be solved by some modern methods such as subgradient projection and interior point methods or by some old methods such as cutting plane methods, ellipsoid methods, and subgradient methods.

Convexity in itself is a property of functions and sets; indeed, a convex function is necessarily continuous in its domain and enjoys strong differentiability properties. For instance, all directional derivatives of such a function must exist at all points in the domain and, as a result, the function has strong curvature restrictions (it curves up, while affine functions have zero curvature and concave functions curve down) that result in handy properties of its first- and second-derivatives.

Specifically, in optimization theory convexity has a special place because when an optimization problem meets suitable convexity conditions it is possible to use necessary first-order conditions for local optima as sufficient conditions and in case of strict convexity conditions the solution is known to be unique.

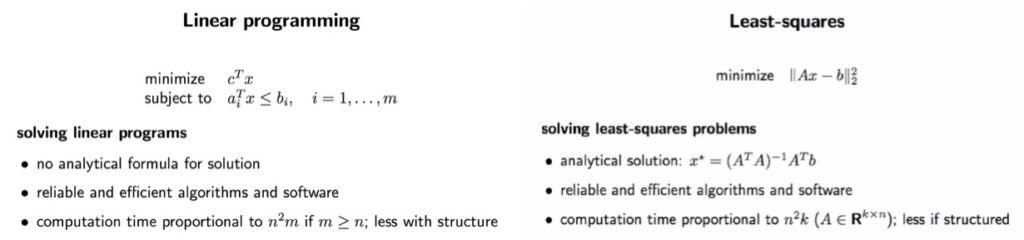

Linear optimization is a special case of mathematical optimization in which the problem is linearly constrained (i.e., using linear functions or affine transformations). It can be solved with least squares by observing that the search direction can be obtained via the solution of a linear least squares subproblem.

For this reason (but mostly because their representation is barely convex and an approximated solution is acceptable), a number of practical problems are transformed in linear form and solved with linear programming.